How SREs Are Using LLMs to Detect Anomalies Before Alerts Fire

Traditional alerting is reactive by design.

CPU crosses a threshold.

Latency breaches a limit.

Error rate spikes.

The alert fires only after users are already affected.

In 2026, advanced SRE teams are moving earlier in the timeline, using LLMs to detect anomalies before alerts ever trigger.

Why Threshold-Based Alerting Is Too Late

Static alerts struggle because:

- Workloads are highly dynamic

- Traffic patterns change hourly

- Seasonal behavior shifts metric baselines

- Autoscaling masks early signals

- Microservices amplify small deviations

A metric can be technically “healthy” while the system is already drifting toward failure.

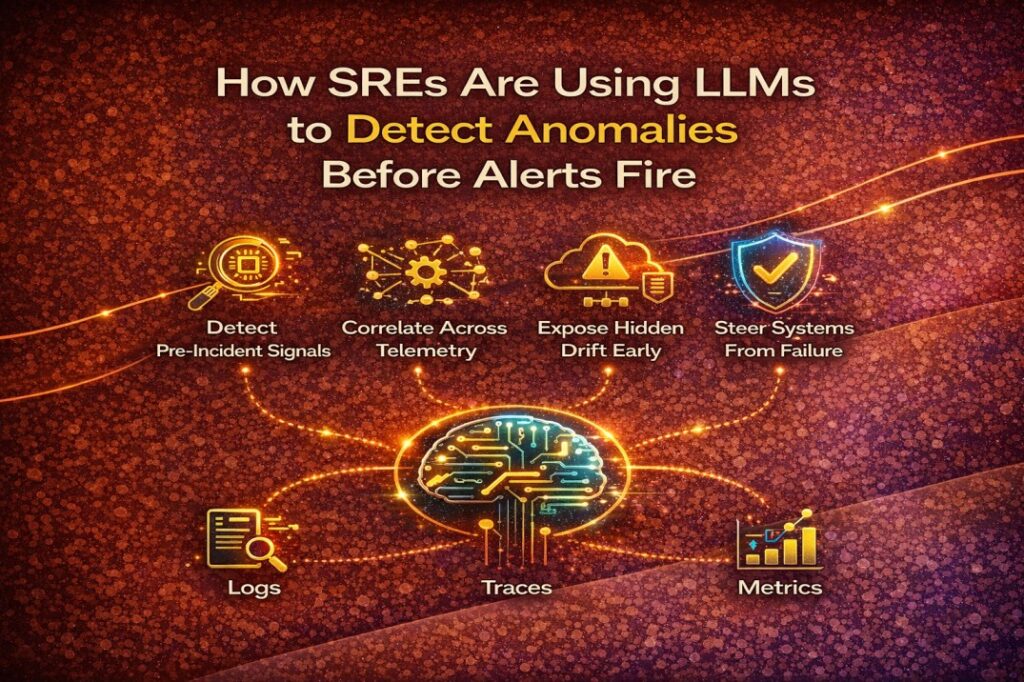

What LLMs Do Differently

LLMs don’t rely on fixed thresholds.

They analyze patterns across signals, such as:

- Metric shape changes (trend, slope, volatility)

- Log semantics (new error phrases, subtle warnings)

- Trace timing shifts (latency distribution skew)

- Event sequences (restarts → throttling → retries)

- Recent config or deployment changes

Instead of asking “Did X exceed Y?”, LLMs ask “Does this look abnormal compared to the system’s usual behavior?”

Early Anomaly Signals SREs Care About

Before alerts fire, LLMs can detect:

- Slow resource saturation trends

- Retry storms forming silently

- Latency tail inflation (P99 pulling away from P95)

- Memory pressure patterns before OOMKills

- Abnormal pod churn in specific namespaces

- New log patterns never seen before

- Config changes correlated with subtle degradation

These are pre-incident signals, not incidents yet.
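One of the signals above, "new log patterns never seen before", can be sketched in a few lines. The example masks variable tokens so that lines differing only in IDs or numbers collapse to the same template, then reports templates that are absent from history. The masking rules and the sample log lines are hypothetical; production log templating is usually more elaborate.

```python
import re

# Hypothetical masking rules, applied in order: long hex-looking tokens first,
# then any remaining digit runs.
_MASKS = [
    (re.compile(r"\b[0-9a-f]{8,}\b"), "<HEX>"),
    (re.compile(r"\d+"), "<NUM>"),
]

def template(line):
    """Collapse a log line to a template by masking variable tokens."""
    for pattern, token in _MASKS:
        line = pattern.sub(token, line)
    return line.strip()

def novel_templates(history, recent):
    """Return templates present in recent lines but never seen in history,
    in first-seen order and without duplicates."""
    known = {template(line) for line in history}
    seen, novel = set(), []
    for line in recent:
        t = template(line)
        if t not in known and t not in seen:
            seen.add(t)
            novel.append(t)
    return novel

# Made-up log lines for illustration.
history = [
    "GET /orders/1041 200 12ms",
    "GET /orders/7 200 9ms",
    "cache miss for key 9f86d081884c7d65",
]
recent = [
    "GET /orders/1188 200 14ms",
    "connection pool exhausted, waiting 250ms for a free slot",
    "connection pool exhausted, waiting 900ms for a free slot",
]

print(novel_templates(history, recent))
# ['connection pool exhausted, waiting <NUM>ms for a free slot']
```

The two "connection pool exhausted" lines collapse to one new template, while the familiar request line is ignored. A short list of novel templates like this is also a compact input for an LLM, which can then judge whether the new phrasing is worrying.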

The Role of Telemetry Correlation

LLMs become powerful only when they see all signals together:

- Metrics (Prometheus)

- Logs (Loki)

- Traces (Tempo)

- Kubernetes events

- Deployment & config changes

- Scaling and scheduling behavior

This is where platforms like KubeHA matter: they provide correlated telemetry, not siloed data.

With correlated input, LLMs have far less to guess.

They reason over evidence that is already connected.
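As a rough illustration of what "already connected" evidence can look like, the sketch below merges signals from different sources into one time-ordered timeline for a single workload and renders it as plain text for a prompt. The `Signal` record, the ten-minute window, and the sample entries are assumptions made for this example. It does not call Prometheus, Loki, Tempo, or KubeHA, and it assumes the records were already fetched.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Signal:
    ts: int        # Unix timestamp in seconds
    source: str    # e.g. "metric", "log", "trace", "k8s-event", "deploy"
    workload: str
    text: str

def correlate(signals, workload, anchor_ts, window_s=600):
    """Select signals for one workload that fall within window_s seconds
    before anchor_ts, ordered oldest first."""
    hits = [
        s for s in signals
        if s.workload == workload and anchor_ts - window_s <= s.ts <= anchor_ts
    ]
    return sorted(hits, key=lambda s: s.ts)

def render_evidence(timeline, anchor_ts):
    """Format the timeline as plain text suitable for an LLM prompt."""
    return "\n".join(
        f"T-{anchor_ts - s.ts:>4}s [{s.source}] {s.text}" for s in timeline
    )

# Made-up, pre-fetched records for illustration.
now = 1_700_000_600
signals = [
    Signal(now - 60, "k8s-event", "checkout", "pod restarted"),
    Signal(now - 540, "deploy", "checkout", "configmap checkout-config updated"),
    Signal(now - 200, "log", "search", "slow query warning"),
    Signal(now - 300, "metric", "checkout", "memory working set rising steadily"),
    Signal(now - 4000, "deploy", "checkout", "image tag changed"),
    Signal(now - 120, "log", "checkout", "new warning: connection pool near limit"),
]

timeline = correlate(signals, "checkout", now)
print(render_evidence(timeline, now))
```

The output is a four-line timeline running from the config change to the pod restart. The unrelated `search` entry and the deploy from over an hour earlier are left out. Joining on workload and time window is the simplest possible correlation key; trace IDs or owner references would be natural additions.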

Real SRE Workflow (Before vs After)

Traditional

- Alert fires

- Scramble to find context

- Manual correlation

- Delayed RCA

LLM-assisted

- Anomaly flagged early

- “This pattern resembles a memory leak + config change”

- Supporting metrics and logs linked

- Preventive action taken

- No alert, no outage

This is incident prevention, not response.

Why This Changes On-Call Life

Early anomaly detection:

- Reduces false positives

- Prevents cascading failures

- Cuts noise during peak hours

- Lowers MTTR by reducing guesswork

- Reduces alert fatigue

SREs stop firefighting and start steering systems away from failure.

Common Pitfalls Teams Must Avoid

LLMs fail when teams:

- Feed only metrics without logs/traces

- Skip change data (deploys, config diffs)

- Treat LLM output as truth instead of signal

- Don’t validate against historical behavior

LLMs augment SRE judgment – they don’t replace it.
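Treating LLM output as a signal rather than truth can be enforced in code. The sketch below accepts a finding only if it parses, meets a confidence floor, and every trend it cites matches the raw data. The JSON schema (`hypothesis`, `confidence`, `evidence`), the metric name, and the 0.6 floor are hypothetical, and comparing only the first and last sample is a deliberately crude trend check.

```python
import json

def observed_trend(series):
    """Classify a series by comparing its first and last sample."""
    if series[-1] > series[0]:
        return "rising"
    if series[-1] < series[0]:
        return "falling"
    return "flat"

def verify_finding(raw_llm_output, series_by_metric, min_confidence=0.6):
    """Accept a model finding only when it is well formed, confident enough,
    and each cited trend agrees with the data we actually hold."""
    try:
        finding = json.loads(raw_llm_output)
        confidence = float(finding["confidence"])
        evidence = finding["evidence"]
    except (ValueError, KeyError, TypeError):
        return {"accepted": False, "reason": "unparseable output"}
    if confidence < min_confidence:
        return {"accepted": False, "reason": "low confidence"}
    if not isinstance(evidence, list) or not evidence:
        return {"accepted": False, "reason": "no evidence cited"}
    for item in evidence:
        if not isinstance(item, dict):
            return {"accepted": False, "reason": "malformed evidence"}
        metric = item.get("metric")
        series = series_by_metric.get(metric)
        if not series or len(series) < 2:
            return {"accepted": False, "reason": f"no data for {metric}"}
        if observed_trend(series) != item.get("claim"):
            return {"accepted": False, "reason": f"claim about {metric} not supported"}
    return {"accepted": True, "reason": "evidence matches data",
            "hypothesis": finding.get("hypothesis", "")}

# Made-up model output and metric data for illustration.
raw = ('{"hypothesis": "memory leak after config change", "confidence": 0.8, '
       '"evidence": [{"metric": "memory_working_set_bytes", "claim": "rising"}]}')
series = {"memory_working_set_bytes": [410e6, 455e6, 520e6]}

print(verify_finding(raw, series)["accepted"])               # True
print(verify_finding("the pod looks sad", series)["reason"])  # unparseable output
```

An accepted finding is still only a hypothesis that has passed a basic check. The final call on preventive action stays with the on-call engineer.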

Alerts tell you something is already broken.

LLMs help you see something is about to break.

In modern Kubernetes environments:

- Anomalies appear before alerts

- Correlation matters more than thresholds

- Prevention beats response

The most mature SRE teams in 2026 use LLMs as early-warning systems, not just incident explainers.

Follow KubeHA for more on:

- LLM-driven anomaly detection

- Log-metric-trace correlation

- Kubernetes change impact analysis

- AI-assisted SRE workflows

- Practical, production-grade reliability patterns

Experience KubeHA today: www.KubeHA.com

KubeHA’s introduction: https://www.youtube.com/watch?v=PyzTQPLGaD0
