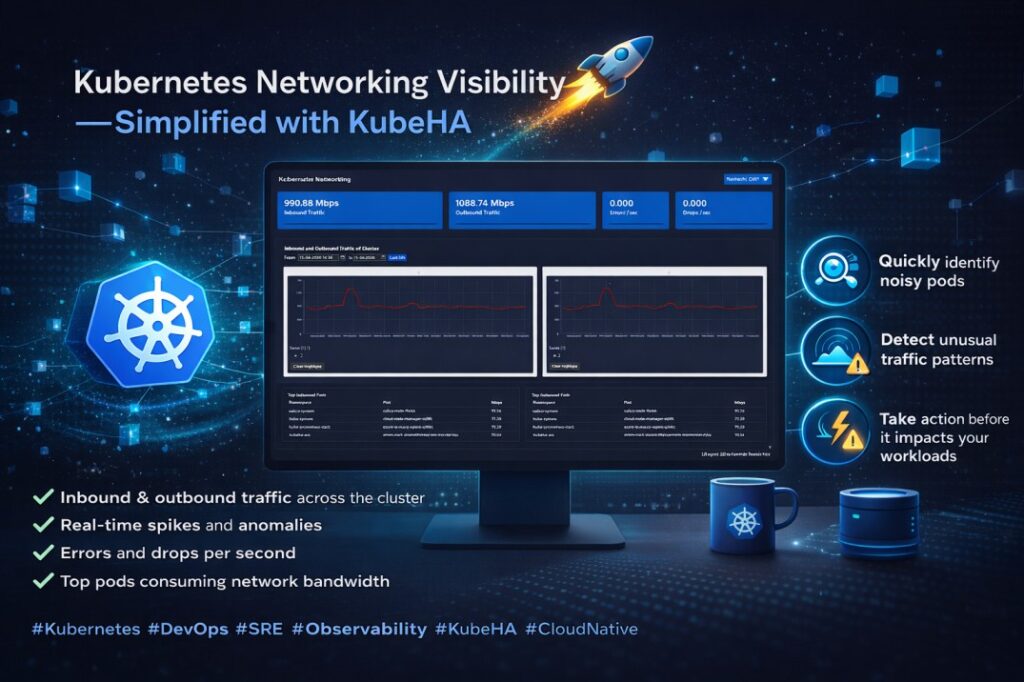

Now Test KubeHA Easily on Minikube

You can now install and test KubeHA directly on a local Minikube environment using a single command.

- ✅ No public IP required
- ✅ No HTTPS/domain setup required
- ✅ Perfect for local Kubernetes testing and POCs
- ✅ A quick way to explore KubeHA capabilities before production deployment

If your Kubernetes cluster and KubeHA are both running inside the same […]