Everyone thinks HPA solves traffic spikes.

It doesn’t.

Here’s the uncomfortable truth:

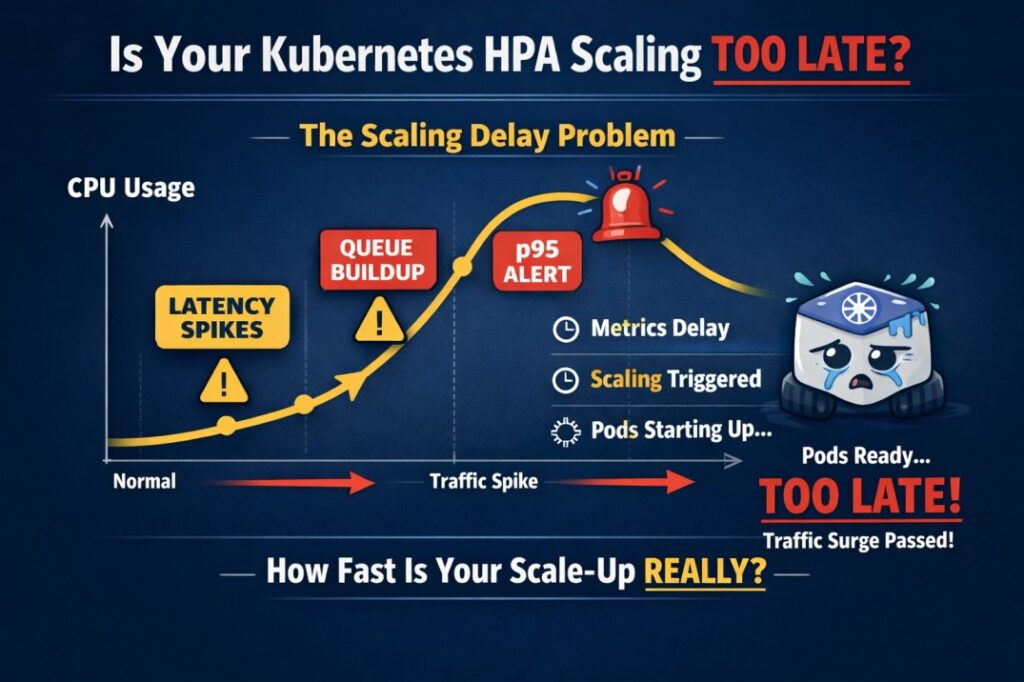

Kubernetes HPA is reactive, not predictive.

By the time CPU hits 80%:

- Your latency is already rising

- Your p95 is exploding

- Queues are forming

- Users are feeling it

Why?

Because HPA:

• Works on averaged metrics

• Depends on scrape intervals

• Responds after saturation begins

• Takes pod startup time into account

👉 So scaling decision = delayed

👉 Pod ready = further delayed

👉 Traffic peak = already passed

That’s why many teams say:

“Autoscaling didn’t help during peak hours.”

Here’s what advanced teams do instead:

✅ Scale on RPS or queue depth

✅ Use custom metrics

✅ Set realistic resource requests

✅ Reduce container cold start time

✅ Use predictive scaling (or buffer pods)

If your scaling only reacts to CPU, you’re already late.

Question for SREs:

How long does your cluster actually take from scale trigger → ready pod?

(If you don’t know – you should.)

Follow KubeHA(https://lnkd.in/gGmRDs77) for deeper insights on cloud-native reliability, cost control, and modern DevOps strategies.

Book a demo today athttps://lnkd.in/dytfT3kk

Experience KubeHA today:www.KubeHA.com

KubeHA’s introduction,https://lnkd.in/gjK5QD3i

#DevOps #sre #monitoring #observability #remediation #Automation #kubeha #IncidentResponse #AlertRecovery #prometheus #opentelemetry #grafana, #loki #tempo #trivy #slack #Efficiency #ITOps #SaaS #ContinuousImprovement #Kubernetes #TechInnovation #StreamlineOperations #ReducedDowntime #Reliability #ScriptingFreedom #MultiPlatform #SystemAvailability #srexperts23 #sredevops #DevOpsAutomation #EfficientOps #OptimizePerformance #Logs #Metrics #Traces #ZeroCode