Your Kubernetes Skills Don’t Matter If You Can’t Debug Under Pressure.

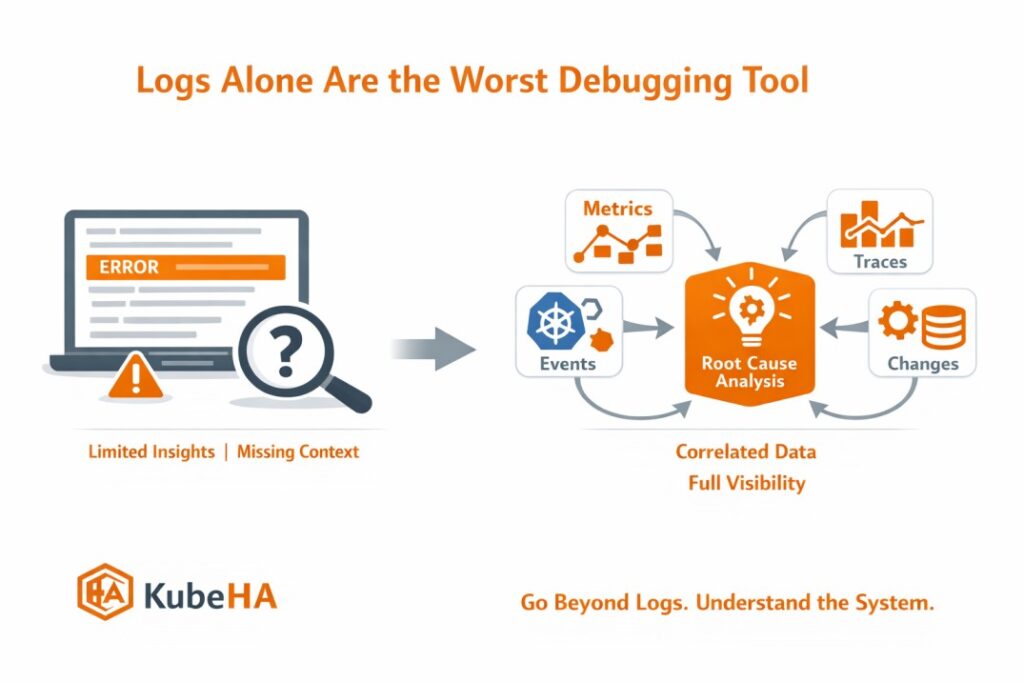

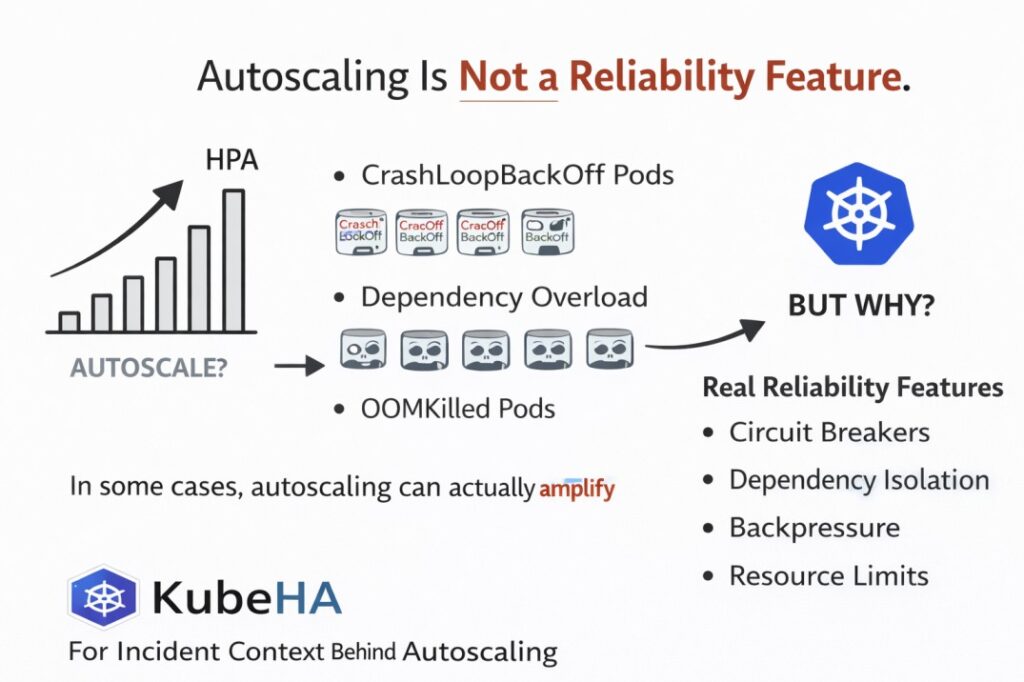

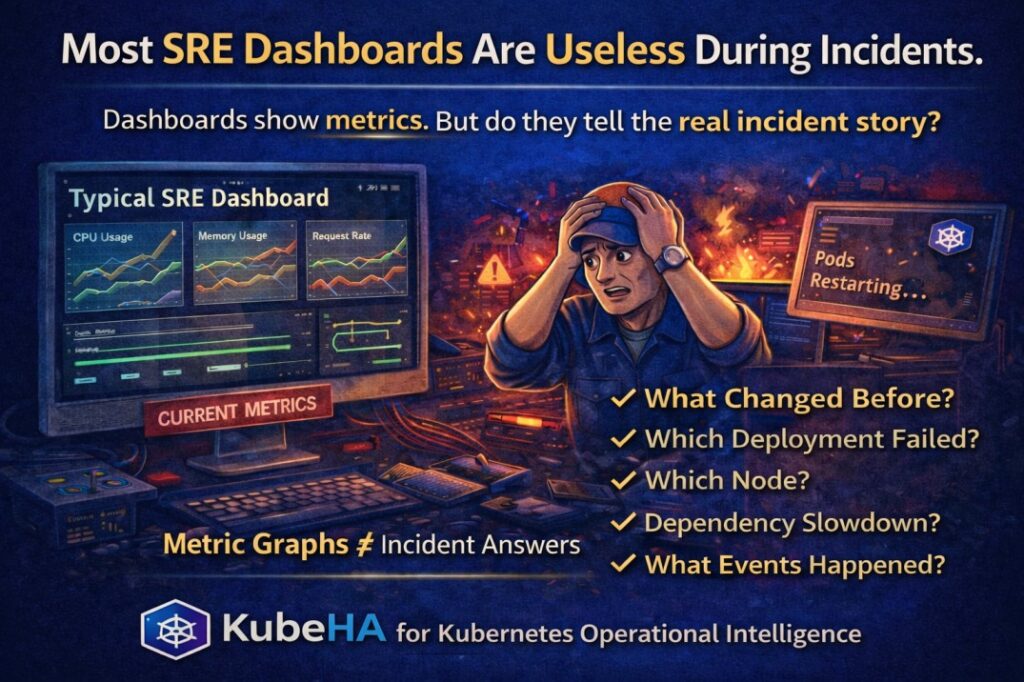

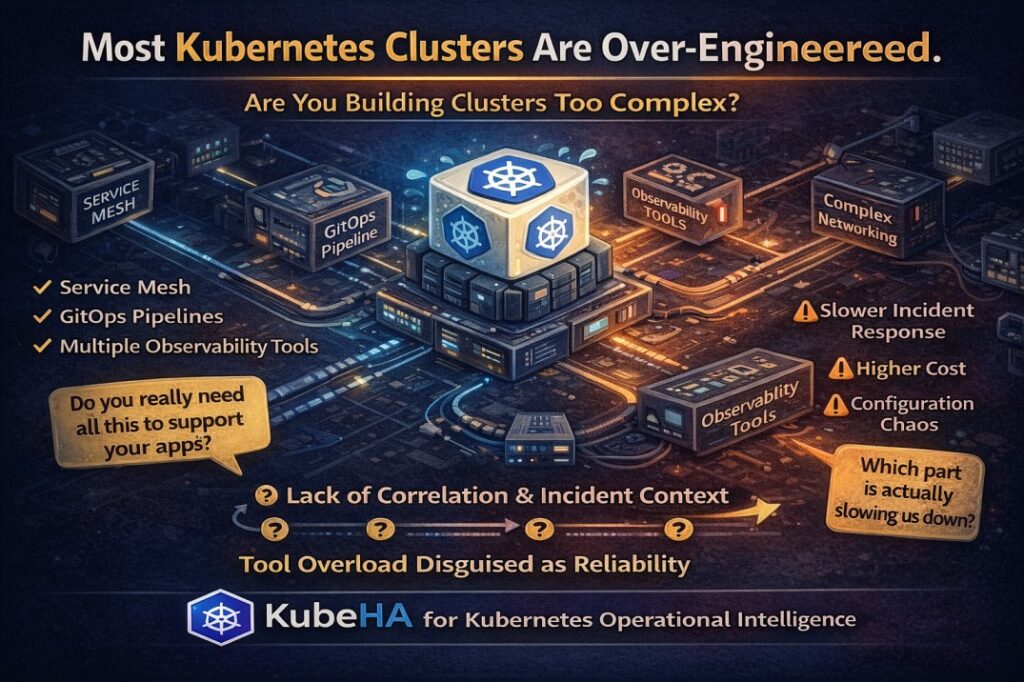

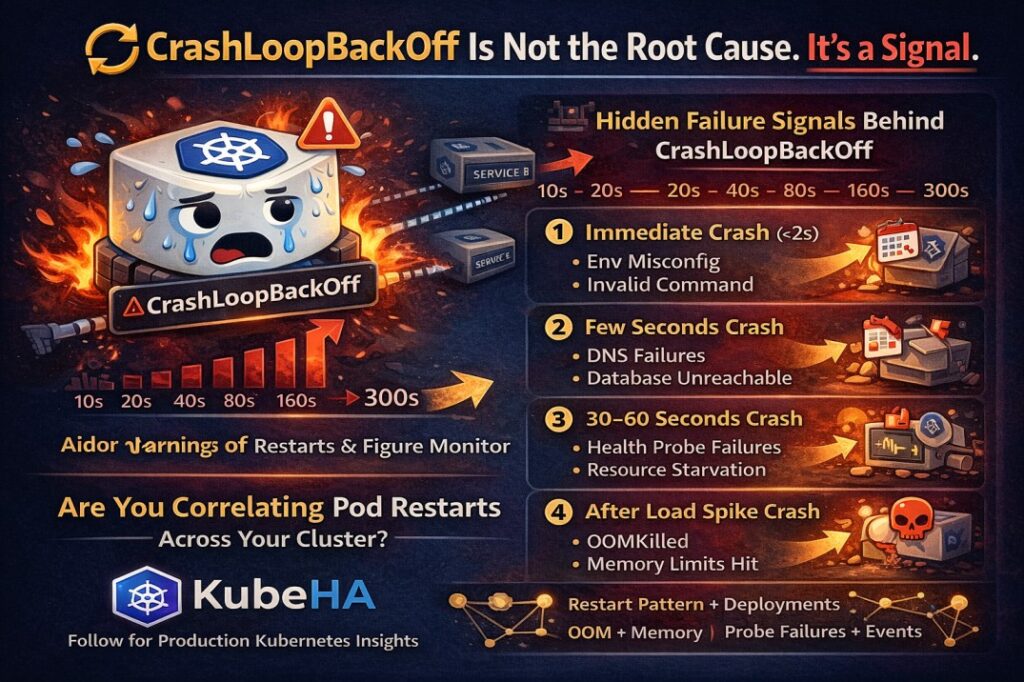

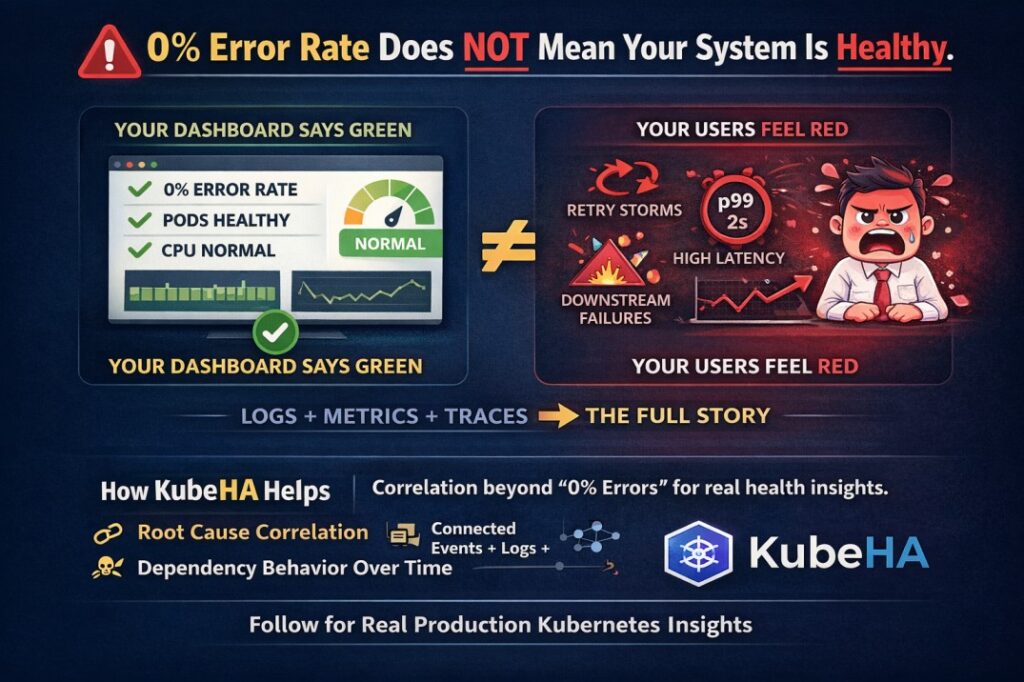

You can write perfect YAML. You know Helm, HPA, networking, storage. But during an incident? That knowledge is rarely the problem.

Reality of Production Incidents

In real outages, you don’t get time to think slowly. You face:

• incomplete data
• noisy alerts
• multiple failing components
• pressure from stakeholders

The challenge is not what you know. It’s […]