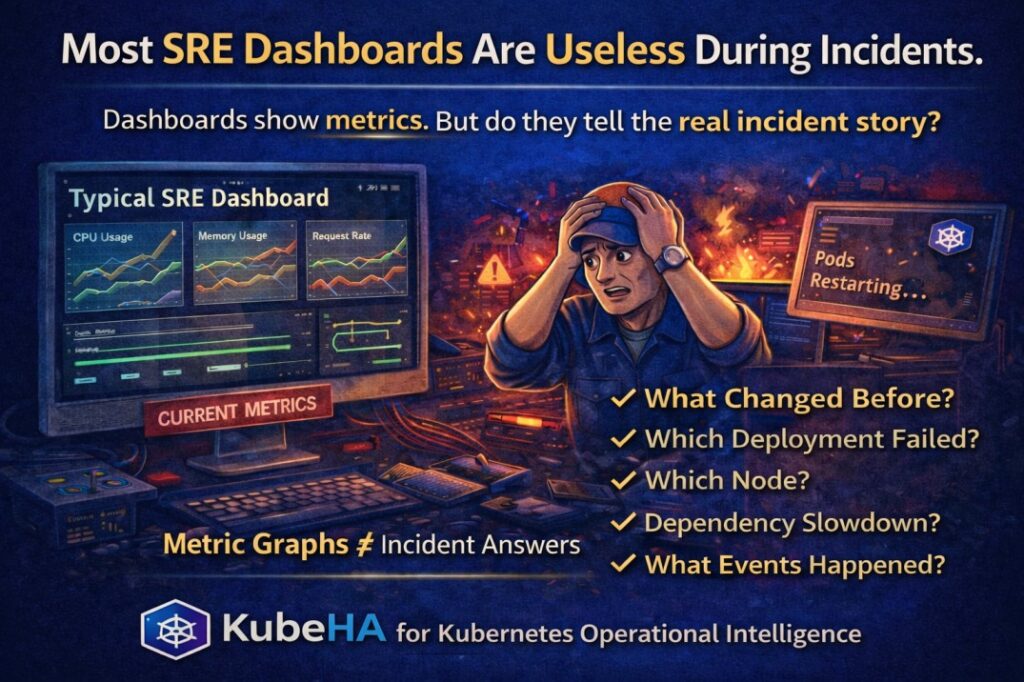

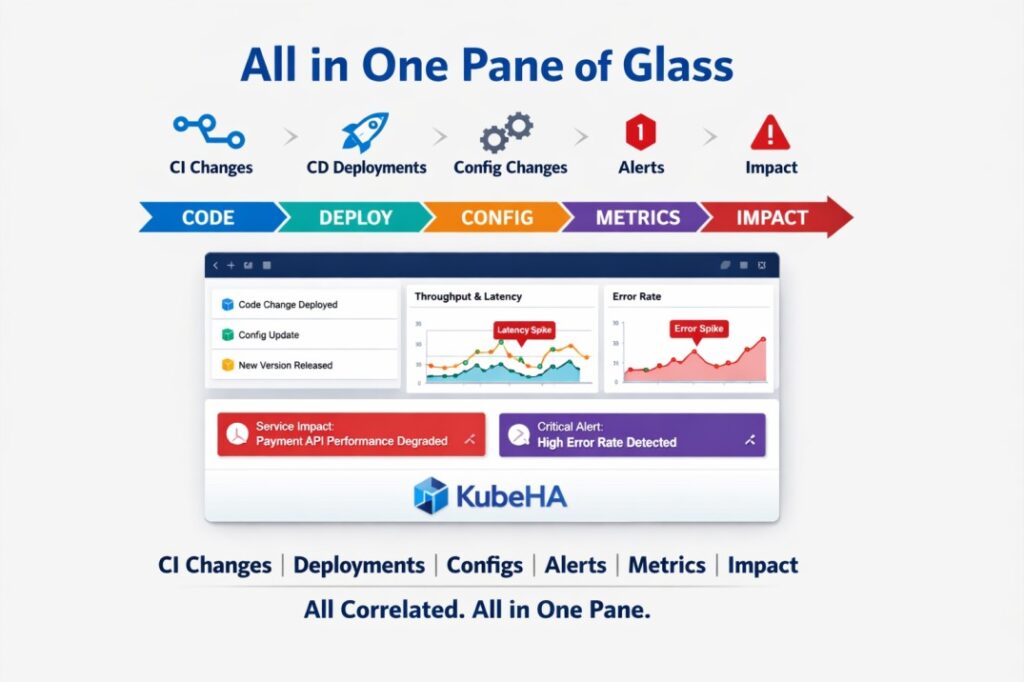

Most SRE Dashboards Are Useless During Incidents.

This might sound harsh, but many SREs will agree. During an incident, nobody is calmly staring at dashboards. Engineers are usually running:

• `kubectl logs`
• `kubectl describe`
• `kubectl get events`

Why? Because dashboards mostly show metrics, not context. A typical dashboard tells you:

• CPU usage
• Memory usage
• Request rate

But incidents require answers like:

• What […]
